The Problem

Oak Network's SDK adoption was stalling. Every new partner had to manually read documentation, write integration boilerplate, handle Oak's Result error pattern, set up webhook handlers, and submit a PR — a process that routinely took days, required deep SDK knowledge, and generated inconsistent code. Campaign managers faced the same friction on the other side: configuring multi-market payment flows, treasury models, and smart contracts required technical expertise most founders didn't have.

The Solution

Oak Autopilot is a dual-pipeline multi-agent platform. The Integration Engine deploys AI agents that analyze a GitHub repository, plan file-level changes, generate SDK-compliant code, and open a pull request automatically. The Campaign Dashboard lets founders configure payment providers, treasury models, and cross-platform listings through a conversational AI interface — no technical knowledge required.

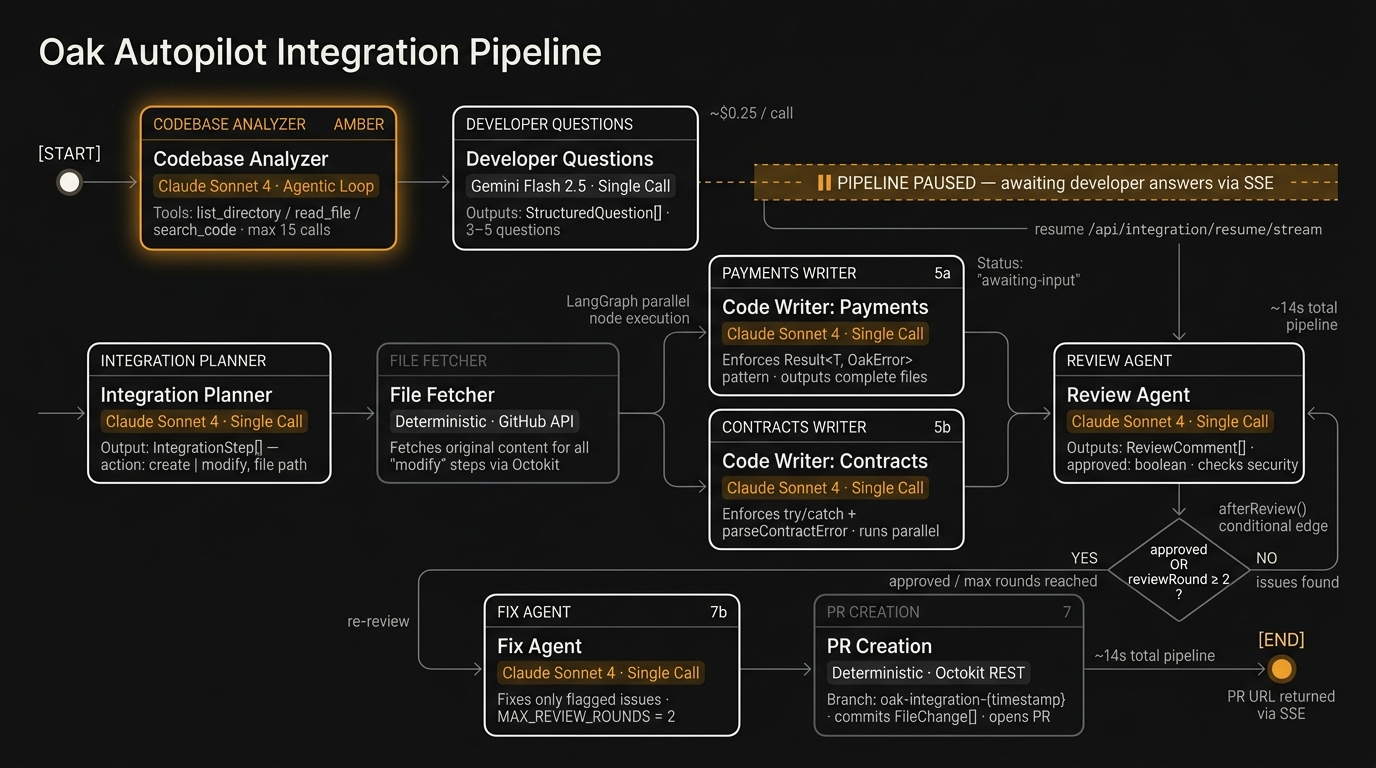

Integration Pipeline — full agent flow with LangGraph routing, parallel execution, and review loop

From GitHub URL and requirements to a ready-to-merge pull request with full SDK integration code.

4 dashboard agents and 7 integration agents — each with a defined role, model assignment, and tool set.

Separate state graphs for integration (7 nodes, conditional routing) and dashboard (4 nodes, parallel branches).

Full pipeline run with multi-model tiering across Claude Sonnet, Gemini 2.5 Flash, and Claude Haiku.

Two Pipelines, One Platform

The system is built on two independent LangGraph.js state graphs, each managing a separate concern. Both share the same underlying LLM abstraction layer, streaming infrastructure, and knowledge bases — but differ fundamentally in their orchestration patterns.

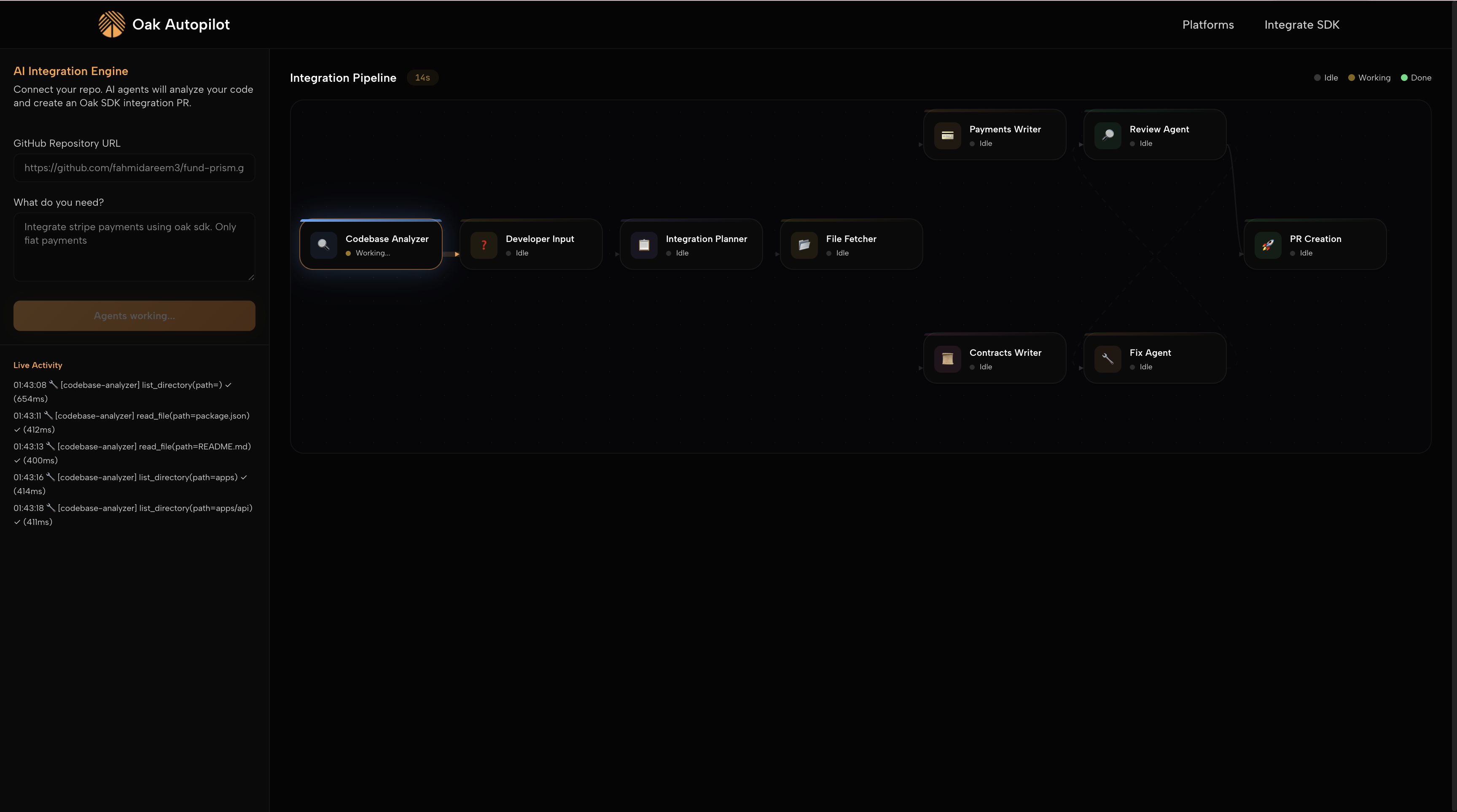

Integration Pipeline in action — Codebase Analyzer working, downstream agents idle, live tool call log on left

Pipeline diagram nodes: Analyzer, Questions, Planner, Writer, Writer, Agent, Creation, Setup, Flow, Agent, Resolver.

Stack Overview

| Layer | Technology | Role |
|---|---|---|
| Frontend | Next.js 14, React 18, Tailwind CSS | Dashboard UI + real-time pipeline visualization |
| Backend | Express.js + TypeScript | REST + SSE endpoints, agent orchestration host |
| Orchestration | LangGraph.js | State graph execution, conditional routing, node management |
| LLM Layer | Anthropic SDK, Google Generative AI | Multi-provider fallback with per-agent model assignment |
| Code Delivery | Octokit REST API | Branch creation, file commits, PR opening |
| CMS | Sanity Studio v3 | Campaign config persistence and dashboard rendering |
| Streaming | Server-Sent Events (SSE) | Real-time agent progress, tool call transparency to frontend |

Integration Pipeline — 7 Agents

The integration pipeline runs in two phases separated by a human-in-the-loop pause. Phase 1 analyzes the codebase and surfaces targeted questions. Phase 2 takes developer answers and produces working, reviewed, production-ready code.

Codebase Analyzer: uses the list_directory, read_file, and search_code tools to autonomously explore the repo. Detects framework, language, existing payments, DB/ORM, auth patterns, and coding style. Limited to ~12–15 tool calls to control cost.

Integration Planner: produces an IntegrationStep[] specifying which files to create or modify. It never deletes or rewrites existing code.

Payments Writer: generates code that checks result.ok before accessing result.value. For modifications, it receives the full original file content and returns a complete updated file.
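The Result check mentioned above can be illustrated with a discriminated union. This is a minimal sketch: only `result.ok` and `result.value` appear in the text, while the `OakResult` type, the `error` field, and the `createPayment` function are hypothetical names chosen for illustration, not Oak's actual SDK API.

```typescript
// Hypothetical Result shape: only `ok` and `value` come from the article.
type OakResult<T> =
  | { ok: true; value: T }
  | { ok: false; error: string };

// Hypothetical SDK call, used purely for illustration.
function createPayment(amountCents: number): OakResult<{ paymentId: string }> {
  if (amountCents <= 0) {
    return { ok: false, error: "amount must be positive" };
  }
  return { ok: true, value: { paymentId: `pay_${amountCents}` } };
}

// The caller inspects `ok` first; `value` is only read on the success branch.
function describePayment(amountCents: number): string {
  const result = createPayment(amountCents);
  if (result.ok === false) {
    return `payment failed: ${result.error}`;
  }
  return `payment created: ${result.value.paymentId}`;
}
```

Because the union is discriminated on `ok`, the compiler rejects any access to `result.value` before the check, which is the property a review step would want generated code to preserve.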

Second code writer: generates code with parseContractError error handling. Runs in parallel with the Payments Writer via LangGraph parallel execution.

Review Agent: returns a ReviewComment[] and an approved flag.

Fix Agent: runs for at most two rounds (MAX_REVIEW_ROUNDS = 2) before forcing PR creation with review notes included.
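The exit condition of the review loop might be routed roughly as follows. `MAX_REVIEW_ROUNDS`, `ReviewComment`, and the `approved` flag come from the text; the state shape, the comment fields, and the node names `"fix"` and `"create_pr"` are assumptions made for this sketch.

```typescript
const MAX_REVIEW_ROUNDS = 2;

interface ReviewComment {
  file: string;    // assumed field
  message: string; // assumed field
}

interface ReviewState {
  approved: boolean;
  comments: ReviewComment[];
  reviewRound: number; // number of fix rounds already completed (assumed field)
}

type NextNode = "fix" | "create_pr";

// Decide where the graph goes after the reviewer has run.
function routeAfterReview(state: ReviewState): NextNode {
  if (state.approved) return "create_pr";
  // Round limit reached: open the PR anyway and carry the comments along as notes.
  if (state.reviewRound >= MAX_REVIEW_ROUNDS) return "create_pr";
  return "fix";
}
```

In LangGraph.js, a function of this shape would typically be supplied as the routing function of a conditional edge leaving the review node.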

PR creation: creates a branch named oak-integration-{timestamp}, commits all FileChange[], and opens a PR with a generated title, requirements summary, modified file list, review comments, and frontend deployment next steps.

Dashboard Pipeline — 4 Agents

The dashboard pipeline is conversational. The Campaign Setup agent gathers requirements through natural language, then payment and treasury agents run in parallel to produce a complete, persisted configuration.

Payment Flow agent: uses the test_payment_route and get_provider_fees tools. Selects providers per market: Stripe (US), PagarMe/PIX (Brazil), MercadoPago (Colombia). Recommends on-ramps (Bridge) and off-ramps (Avenia). ~$0.08/call.
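The per-market provider selection can be pictured as a simple lookup. The market-to-provider pairs are taken from the text; the two-letter market codes, the type names, and `selectProviders` are hypothetical, and the real agent presumably also weighs test results and fees rather than using a static table.

```typescript
// Assumed market codes for the three markets named in the article.
type MarketCode = "US" | "BR" | "CO";

const PROVIDER_BY_MARKET: Record<MarketCode, string> = {
  US: "Stripe",
  BR: "PagarMe/PIX",
  CO: "MercadoPago",
};

// Map each requested market to its provider, preserving input order.
function selectProviders(
  markets: MarketCode[],
): Array<{ market: MarketCode; provider: string }> {
  return markets.map((market) => ({
    market,
    provider: PROVIDER_BY_MARKET[market],
  }));
}
```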

Key Engineering Decisions

Selective Agentic Loops

Not all agents use tool-use loops. Only agents that require genuine exploration — the Codebase Analyzer (doesn't know which files to read upfront) and the Payment Flow agent (must test routes in sandbox) — run as true agentic loops. The remaining 8 agents use single LLM calls with structured outputs. This keeps costs predictable and latency low while enabling autonomy exactly where it's needed.
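A bounded tool-use loop of the kind described here might look like the sketch below. The tool names come from the Codebase Analyzer description; the `Chooser` callback stands in for the LLM deciding its next step, and every type and function name is an assumption for illustration.

```typescript
type ToolName = "list_directory" | "read_file" | "search_code";

interface ToolCall {
  tool: ToolName;
  arg: string;
}

// Stand-in for the model: returns the next tool call, or null when it has seen enough.
type Chooser = (callsSoFar: ToolCall[]) => ToolCall | null;

// Run the loop until the model stops or the tool-call budget is spent.
function runBoundedLoop(choose: Chooser, maxToolCalls: number): ToolCall[] {
  const calls: ToolCall[] = [];
  while (calls.length < maxToolCalls) {
    const next = choose(calls);
    if (next === null) break; // the model decided it is done
    calls.push(next); // a real agent would execute the tool and record its output here
  }
  return calls;
}
```

A single-call agent, by contrast, needs no loop at all: it sends one prompt and parses one structured response, which is why its cost is easy to predict.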

Human-in-the-Loop as a First-Class Feature

The integration pipeline deliberately pauses after codebase analysis for developer input. Rather than making assumptions about payment coexistence strategy, webhook placement, or treasury preference, the system surfaces 3–5 targeted questions. The payoff is code that reflects the developer's actual intent rather than the model's best guess.
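The pause between the two phases can be modeled as a gate that only opens once every question has an answer. This is a sketch under assumptions: the question topics echo the ones named above, but the `ClarifyingQuestion` shape, the ids, and both function names are hypothetical.

```typescript
interface ClarifyingQuestion {
  id: string;
  text: string;
}

// Developer answers keyed by question id.
type Answers = Record<string, string>;

// Return the ids of questions that still have no non-empty answer.
function unansweredQuestions(
  questions: ClarifyingQuestion[],
  answers: Answers,
): string[] {
  return questions
    .filter((q) => (answers[q.id] ?? "").trim() === "")
    .map((q) => q.id);
}

// Phase 2 (planning and code generation) starts only when nothing is outstanding.
function canStartPhaseTwo(
  questions: ClarifyingQuestion[],
  answers: Answers,
): boolean {
  return unansweredQuestions(questions, answers).length === 0;
}
```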

Multi-Model Tiering for Cost Control

Each agent is assigned the cheapest model that can handle its task. Reasoning-heavy agents (code generation, review, planning) use Claude Sonnet. Structured output generation (questions, treasury recommendations) uses Gemini 2.5 Flash at a fraction of the cost. Conversational agents use Claude Haiku. A fallback chain automatically switches to Claude Haiku if the Gemini budget is exhausted. This reduces cost by roughly 40% compared with using Sonnet throughout.
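The tiering and fallback rule can be sketched as a small lookup plus one override. The tier labels below are descriptive names, not real provider model identifiers, and `TaskKind`, `pickModel`, and `savingsPercent` are hypothetical. The last function simply checks the savings figure against the per-integration costs reported in this write-up ($0.61 tiered, an estimated $1.05 with Sonnet throughout).

```typescript
// Descriptive tier labels, not actual API model IDs.
type ModelTier = "claude-sonnet" | "gemini-flash" | "claude-haiku";
type TaskKind = "reasoning" | "structured" | "conversational";

const TIER_BY_TASK: Record<TaskKind, ModelTier> = {
  reasoning: "claude-sonnet",
  structured: "gemini-flash",
  conversational: "claude-haiku",
};

// Pick the assigned tier, falling back to Haiku when the Gemini budget is spent.
function pickModel(task: TaskKind, geminiBudgetExhausted: boolean): ModelTier {
  const tier = TIER_BY_TASK[task];
  if (tier === "gemini-flash" && geminiBudgetExhausted) return "claude-haiku";
  return tier;
}

// Relative saving of the tiered cost against a single-model baseline, in percent.
function savingsPercent(tieredCost: number, baselineCost: number): number {
  return ((baselineCost - tieredCost) / baselineCost) * 100;
}
```

With the reported figures, `savingsPercent(0.61, 1.05)` comes out at about 42, which is consistent with the roughly 40% reduction quoted above.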

SSE Streaming for Pipeline Transparency

All pipeline execution is streamed to the frontend via Server-Sent Events. Clients receive agent events (agent messages with status), tool_call events (tool name, duration in ms, success flag), and complete events. This lets the UI render the exact pipeline state in real time: users see which agent is running and every tool call as it executes. The 14-second integration run is visible rather than a black box.
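The three event kinds map naturally onto the SSE wire format, where each message is an `event:` line, a `data:` line, and a terminating blank line. The sketch below assumes payload field names (`agent`, `status`, `message`, `tool`, `durationMs`, `success`); only the event names and the general contents come from the text.

```typescript
// Assumed payload shapes for the three event kinds named in the article.
type PipelineEvent =
  | { type: "agent"; agent: string; status: string; message: string }
  | { type: "tool_call"; tool: string; durationMs: number; success: boolean }
  | { type: "complete" };

// Serialize one pipeline event as a Server-Sent Events frame.
function toSseFrame(evt: PipelineEvent): string {
  const { type, ...payload } = evt;
  return `event: ${type}\ndata: ${JSON.stringify(payload)}\n\n`;
}
```

On an Express response with `Content-Type: text/event-stream`, each frame would be written with `res.write(frame)`, and a browser `EventSource` could subscribe to the named events with `addEventListener("tool_call", ...)`.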

Non-Destructive Code Modification

The Integration Planner plans at the file level: each step is tagged as create or modify. For modify actions, the File Fetcher node retrieves the current file content from GitHub before passing it to the code writers. Writers receive the original file and output a complete updated file, so the original code is carried through rather than dropped. The design goal is that integrations do not break existing functionality.
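The hand-off from planner to writers might be shaped like this. `IntegrationStep` and the create/modify tagging come from the text; the field names, the `WriterInput` type, and the synchronous `fetchFile` callback (a stand-in for the File Fetcher's GitHub request) are assumptions.

```typescript
interface IntegrationStep {
  action: "create" | "modify";
  path: string;
}

interface WriterInput {
  path: string;
  // Current file content for modify steps; null for files that do not exist yet.
  originalContent: string | null;
}

// Attach the existing file content to every modify step before the writers run.
function prepareWriterInputs(
  steps: IntegrationStep[],
  fetchFile: (path: string) => string,
): WriterInput[] {
  return steps.map((step) => ({
    path: step.path,
    originalContent: step.action === "modify" ? fetchFile(step.path) : null,
  }));
}
```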

What Was Built and What It Proved

Oak Autopilot demonstrated that the hardest part of SDK adoption isn't the SDK — it's the friction of integration. By treating the integration process as an orchestrated multi-agent workflow, it reduced a multi-day developer task to a 14-second automated pipeline with a human review checkpoint.

- Full SDK integrations generated in ~14 seconds, from repo URL to open pull request

- 4–7 files created or modified per integration, with complete TypeScript types and Oak error handling patterns enforced

- Automated review loop catches and fixes security issues (hardcoded secrets, missing auth middleware, incorrect Result patterns) before the PR is opened

- Multi-market payment configuration — US, Brazil, Colombia — handled conversationally with provider testing and fee transparency

- Real-time pipeline visualization via SSE makes the AI process observable and inspectable, not a black box

- $0.61 per integration with multi-model tiering vs. an estimated $1.05 with Sonnet throughout

What I Would Do Differently

The Codebase Analyzer's 12–15 tool call limit is a blunt heuristic. A smarter approach would be to let the agent build a directory map first (one list_directory call at root), then selectively read only files that match heuristic patterns — reducing tool calls by 40–60% while reading the same semantically important files.
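The map-first idea could be prototyped as a filter over the listed paths. This is a sketch only: the patterns are illustrative guesses at what "semantically important" might mean for a payments integration, and it assumes the directory map is already a flat list of repo-relative paths.

```typescript
// Hypothetical heuristics for files worth reading in full.
const IMPORTANT_PATTERNS: RegExp[] = [
  /(^|\/)package\.json$/,
  /payment|checkout|billing/i,
  /webhook/i,
  /auth/i,
];

// Keep only paths that match a heuristic, capped at the read budget.
function selectFilesToRead(directoryMap: string[], maxFiles: number): string[] {
  return directoryMap
    .filter((path) => IMPORTANT_PATTERNS.some((re) => re.test(path)))
    .slice(0, maxFiles);
}
```

The agent would then spend its remaining tool calls on `read_file` for the selected paths instead of discovering them one directory at a time.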

The Fix Agent's max-2-rounds limit can silently ship PRs with unfixed review issues. A better design would let the Review Agent classify issues as blocking vs. advisory, and only force PR creation when no blocking issues remain.
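One way to express the blocking-versus-advisory idea is shown below. The two severity labels come from the paragraph above; the `"escalate"` outcome for blocking issues that survive the round limit is an assumption, since the text does not say what should happen in that case.

```typescript
type Severity = "blocking" | "advisory";

interface ClassifiedComment {
  severity: Severity;
  message: string;
}

type ReviewOutcome = "create_pr" | "fix" | "escalate";

// Advisory comments never hold up the PR; blocking ones must be fixed or escalated.
function decideNextStep(
  comments: ClassifiedComment[],
  round: number,
  maxRounds: number,
): ReviewOutcome {
  const hasBlocking = comments.some((c) => c.severity === "blocking");
  if (!hasBlocking) return "create_pr";
  return round < maxRounds ? "fix" : "escalate";
}
```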