Two Platforms, One Infrastructure Codebase

The infrastructure work spans two distinct platforms, both fully managed with Terraform and deployed on AWS. The first is a Kubernetes-native microservice platform (the Microservice stack) running on EKS with a full GitOps deployment model. The second is a Saleor-based e-commerce checkout platform running on ECS Fargate, with a CloudFront CDN, multi-service Redis, and an RDS PostgreSQL backend shared across three application tiers.

Every AWS resource across both platforms — networking, compute, databases, caching, CDN, secrets, IAM, DNS — is declared in Terraform using a reusable module library. Environments (dev, stage, prod) are separate root configurations that share modules but define their own variable bindings. State lives in S3 with environment-isolated prefixes. There is no console-clicking in production.

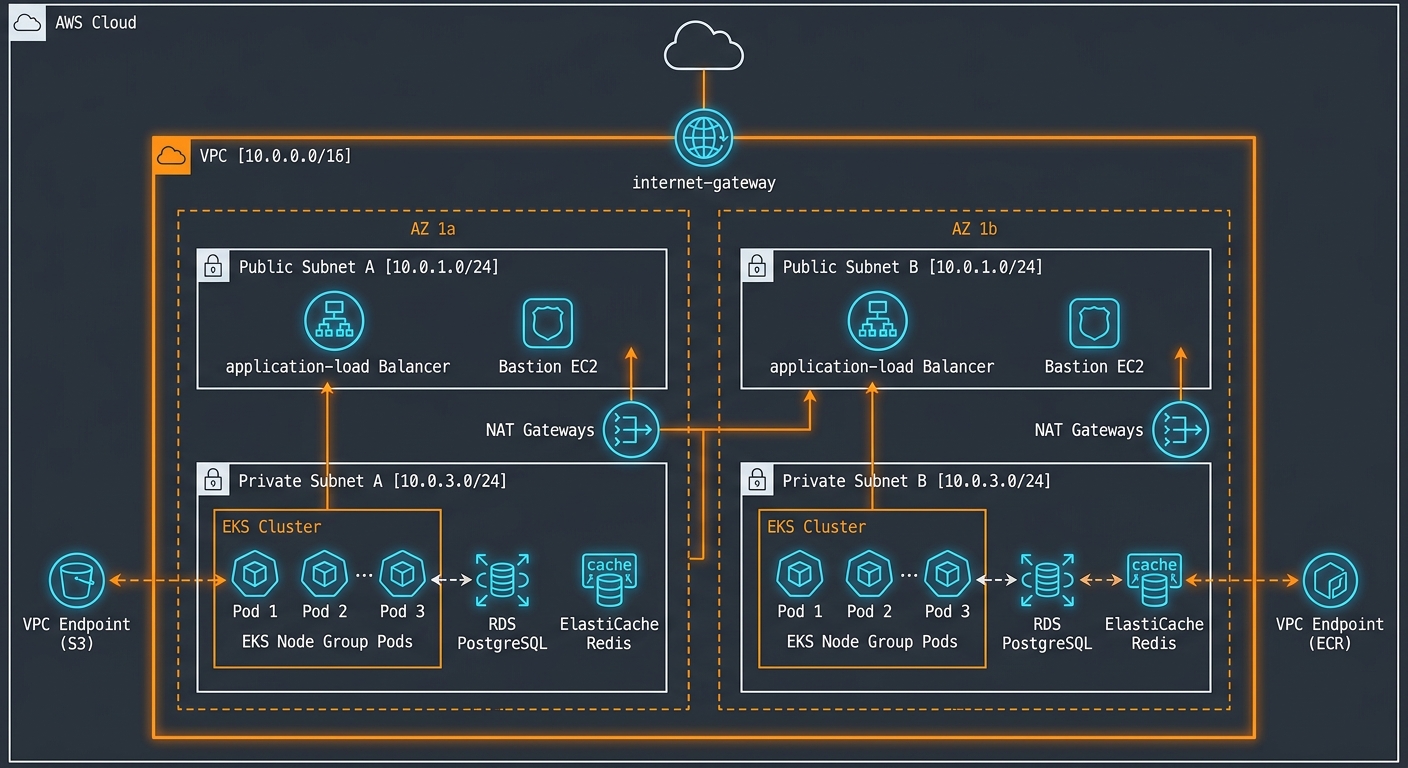

Full platform architecture — VPC layout across two AZs, EKS node groups, RDS PostgreSQL, ElastiCache Redis, and VPC endpoints for S3 and ECR

EKS-based microservice platform and ECS Fargate-based checkout platform — each with independent staging environments.

Reusable modules for VPC, EKS, ECS, RDS, Redis, ALB, CloudFront, IAM, SOPS, bastion, and Route53.

All workloads span us-east-1a/b/c with private/public subnet separation, NAT gateways, and VPC endpoints for S3 and ECR.

Container images for payflow, brandkit, prospector, listing-sync, saleor, fundgate, mailer, discuss, and more.

Terraform Module Library

Rather than writing environment-specific code, all infrastructure is composed from typed, parameterized modules. The module library covers every layer of the stack:

CIDR allocation, DNS hostnames, DNS resolution, resource tagging

AZ-aware subnet provisioning with Kubernetes cluster discovery tags (kubernetes.io/role/elb)

Elastic IP allocation, NAT gateway, private route table association

Internet gateway, public route table with 0.0.0.0/0 and ::/0 egress

EKS cluster v1.32, OIDC provider, EBS CSI driver addon, cluster role binding

Node group with instance type, min/max/desired capacity, and Kubernetes labels

PostgreSQL RDS with parameter groups, subnet groups, SG, backup windows, performance insights

ElastiCache cluster with engine version, node type, subnet group, and SG rules

Amazon MQ broker (mq.m7g.medium), RabbitMQ 3.13, single-AZ, private subnet

ECS service + ALB target group + listener rules + auto-scaling + log group

Task definition factory: CPU, memory, container spec, secrets injection, log driver config

External ALB with dual-stack (IPv4/IPv6), HTTP→HTTPS redirect, ACM cert, SG rules

Customer-managed KMS key with key policy, alias, and rotation config

Batch Secrets Manager secret creation with per-secret IAM policy generation

GitHub Actions OIDC provider + federated IAM role for keyless CI/CD credential vending

CloudWatch alarm → SNS → Lambda → Slack webhook for operational alerts

WAF Web ACL with IP-based rule sets (currently provisioned as standby)

EC2 bastion in public subnet with SG allowing SSH from VPC CIDR only

VPC Design

Both platforms follow an identical network architecture pattern: a single VPC per environment, three private subnets and three public subnets across us-east-1a/b/c, one NAT gateway per public subnet, and an Internet Gateway for public egress. All workloads — EKS nodes, ECS tasks, RDS instances, ElastiCache clusters — live in private subnets. The ALBs and bastion host are the only resources in public subnets.
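A layout like this can be sanity-checked before it reaches a plan. The sketch below uses Python's standard ipaddress module to confirm that every subnet sits inside its VPC and that no two subnets overlap. The CIDRs are the stage values listed in this section. Reading 10.50.232–248.0/21 as three consecutive /21 blocks is an assumption, and check_layout is a hypothetical helper, not part of the module library.

```python
import ipaddress
from itertools import combinations


def check_layout(vpc_cidr, subnet_cidrs):
    """Return (subnets outside the VPC, overlapping subnet pairs)."""
    vpc = ipaddress.ip_network(vpc_cidr)
    subnets = [ipaddress.ip_network(cidr) for cidr in subnet_cidrs]
    outside = [str(s) for s in subnets if not s.subnet_of(vpc)]
    overlaps = [
        (str(a), str(b)) for a, b in combinations(subnets, 2) if a.overlaps(b)
    ]
    return outside, overlaps


# EKS VPC: three /24 subnets, one per AZ.
EKS_VPC = "10.20.0.0/16"
EKS_SUBNETS = ["10.20.1.0/24", "10.20.2.0/24", "10.20.3.0/24"]

# ECS VPC: three /18 blocks plus three /21 blocks.
# Assumption: "10.50.232-248.0/21" means 10.50.232.0, 10.50.240.0, 10.50.248.0.
ECS_VPC = "10.50.0.0/16"
ECS_SUBNETS = [
    "10.50.0.0/18", "10.50.64.0/18", "10.50.128.0/18",
    "10.50.232.0/21", "10.50.240.0/21", "10.50.248.0/21",
]

if __name__ == "__main__":
    for vpc, subnets in [(EKS_VPC, EKS_SUBNETS), (ECS_VPC, ECS_SUBNETS)]:
        outside, overlaps = check_layout(vpc, subnets)
        print(vpc, "outside:", outside, "overlaps:", overlaps)
```

Both lists coming back empty means the layout is consistent. A subnet typed into the wrong VPC range, or two blocks that collide, shows up by name.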

10.20.0.0/16: 10.20.1.0/24 (us-east-1a), 10.20.2.0/24 (us-east-1b), 10.20.3.0/24 (us-east-1c)

10.50.0.0/16: 10.50.0.0/18 (us-east-1b), 10.50.64.0/18 (us-east-1c), 10.50.128.0/18 (us-east-1a), 10.50.232–248.0/21 (AZs a/b/c)

10.51.0.0/16

VPC Endpoints for Cost and Security

EKS nodes and ECS tasks pull container images from ECR and write to S3 in the critical path of every deployment and file upload. Without VPC endpoints, this traffic would exit via NAT gateways, incurring both latency and NAT data-processing costs at scale. Both platforms have S3 gateway endpoints (free, route-table-based) and ECR.dkr interface endpoints (private DNS enabled, eliminating NAT for image pulls entirely).

VPC layout — public subnets (ALB + Bastion EC2), private subnets (EKS pods, RDS, ElastiCache), NAT gateways per AZ, VPC endpoints for S3 and ECR

DNS and TLS

Route53 zones are managed at an account level and referenced via Terraform remote state in environment configs. Each environment creates ALIAS records pointing to its ALB (evaluate-target-health enabled for failover), with ACM certificates provisioned per environment. The checkout platform runs dual-stack (IPv4 + IPv6) ALBs with HTTP-to-HTTPS redirect on port 80.

| Resource | Stage | Prod |
|---|---|---|
| VPC CIDR (EKS) | 10.20.0.0/16 | 10.21.0.0/16 |
| VPC CIDR (ECS) | 10.50.0.0/16 | 10.51.0.0/16 |
| RDS Instance (EKS) | db.t3.medium | Multiple instances (platform, CS, sandbox) |
| RDS Instance (ECS) | db.t3.small | db.t3.medium |
| ElastiCache | cache.t3.micro | cache.t3.small |
| EKS Nodes | t2.large (min 2, max 10) | t2.large (dedicated node groups) |
| NAT Gateways | 1 per AZ | 1 per AZ |
| WAF | Provisioned (standby) | Active |

EKS Platform — Kubernetes Orchestration

The Microservice platform runs on EKS v1.32. Two clusters are provisioned: infra-stage (handles both dev and staging workloads) and infra-prod. Each cluster has managed node groups on t2.large instances with autoscaling (min 2, max 10, desired 2 per group). The EBS CSI driver addon is installed via Terraform for persistent volume support.

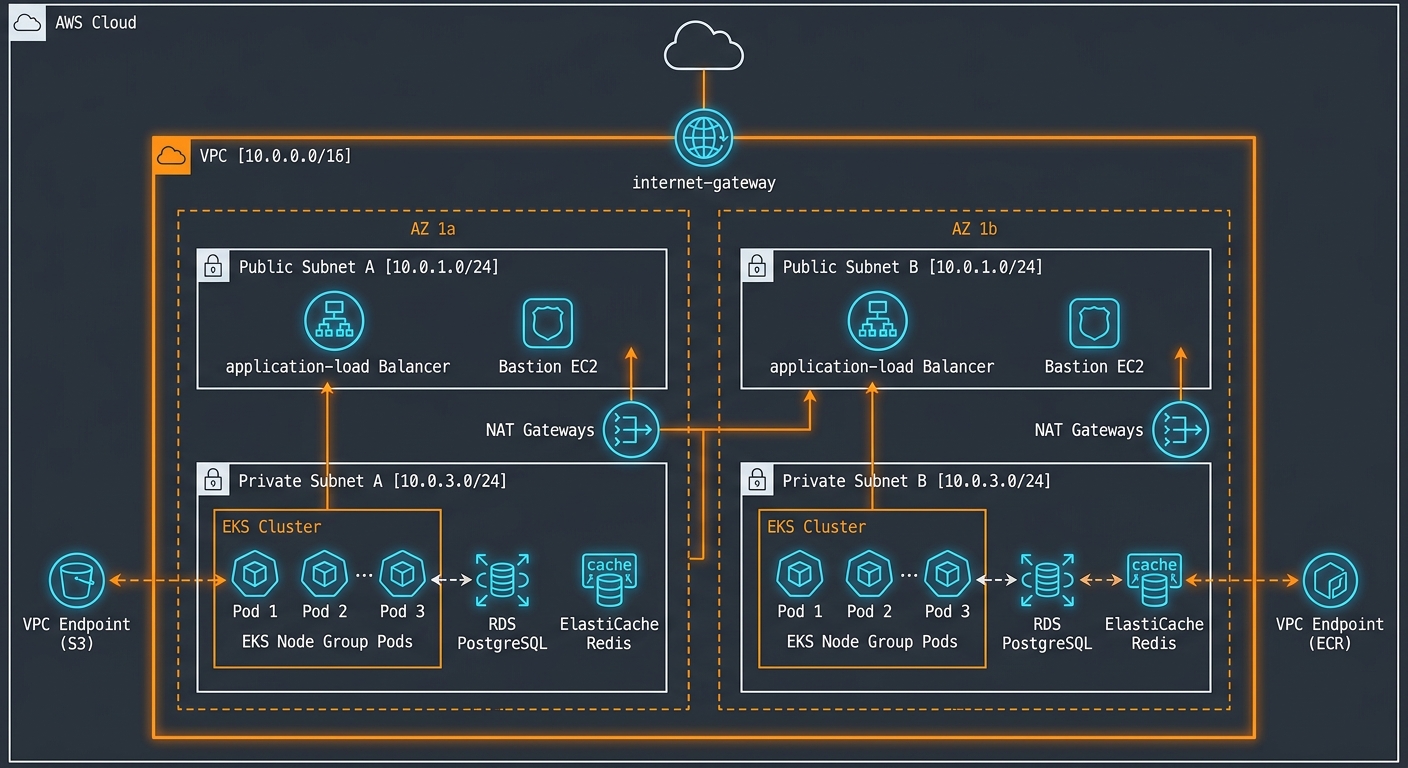

Service routing is handled by Traefik IngressRoute CRDs. Kubernetes manifests for each service live in the deploy/ directory, namespaced by service and environment. Services deployed to the platform include: payflow, brandkit, prospector, listing-sync-service, billing-sub, microfund, and standalone.

EKS cluster — Traefik IngressRoute dispatching to service namespaces, SOPS-encrypted secrets per namespace, OIDC-authenticated GitOps pipeline with ECR image pulls

ECS Fargate — Checkout Platform

The Saleor e-commerce checkout stack runs on ECS Fargate — no EC2 nodes to manage, no AMI patching, and precise per-task CPU/memory billing. The platform runs four primary service groups:

/health/. Execute command enabled for live container debugging. Image tagged with semantic version (3.21.1-stg).

app_checkout). Secrets injected via Secrets Manager ARN references.

storefront_dashboard), separate ALB, Route53 record, and CloudFront distribution. Runs on Redis DBs 4–5.

ses:SendRawEmail.

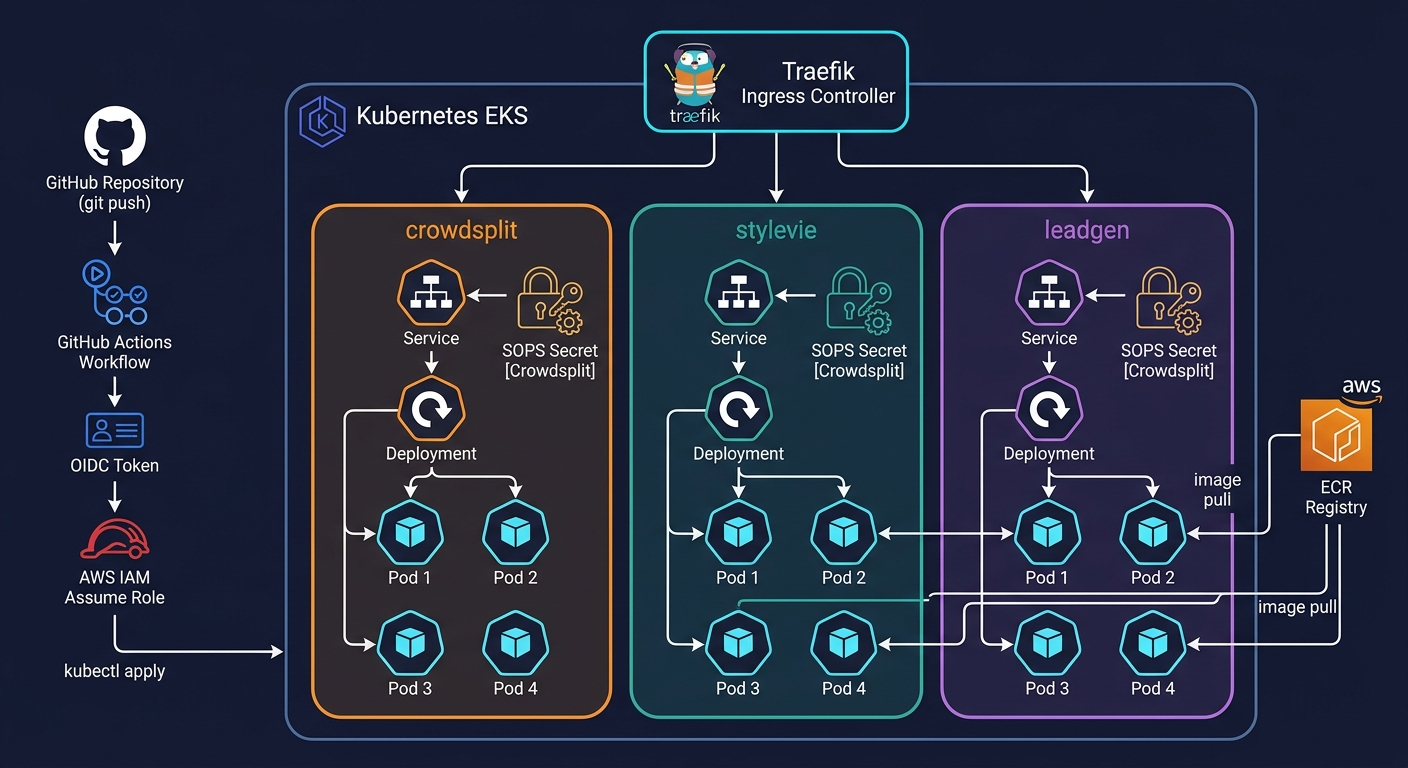

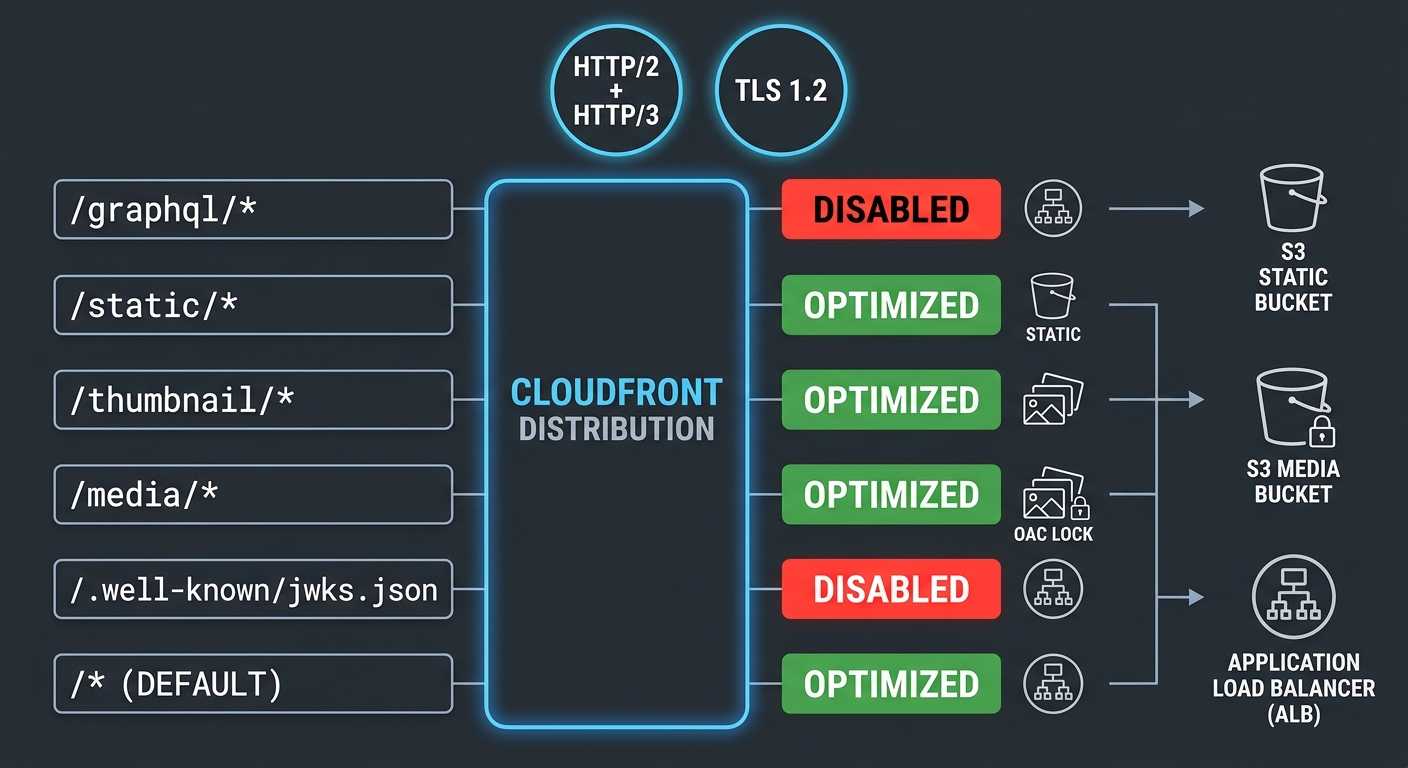

CloudFront CDN — Cache Behavior Architecture

Two CloudFront distributions per environment (Saleor + Storefront) sit in front of the ALBs and S3 origins. The cache behavior rules are the most critical part of the CDN config — different paths have radically different caching requirements and the wrong default would either serve stale API responses or miss static asset caching entirely.

| Path Pattern | Origin | Cache Policy | Reason |
|---|---|---|---|
| /graphql/* | ALB (API) | CachingDisabled | GraphQL mutations and user-specific queries must never be cached |
| /static/* | S3 static bucket | CachingOptimized | Immutable build assets: content-hashed filenames, aggressive cache TTL |
| /thumbnail/* | S3 media bucket | CachingOptimized | Generated thumbnails are deterministic given the same source + dimensions |
| /media/* | S3 media bucket | CachingOptimized | User-uploaded media. OAC enforces S3 access via CloudFront principal only |
| /.well-known/jwks.json | ALB (API) | CachingDisabled | JWT public keys: must be fresh for token verification |
| /* (default) | ALB (API) | CachingOptimized | Remaining paths optimized; HTTP/2+3, Gzip+Brotli compression enabled globally |

CloudFront cache behavior routing — per-path policies, S3 static/media origins with OAC, ALB API origin with caching disabled for GraphQL and JWKS
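To see how a request path lands on one of these behaviors, the following sketch models the lookup: behaviors are tried in listed order, the first matching pattern wins, and the default applies when nothing matches. It uses Python's fnmatchcase as an approximation of CloudFront's case-sensitive `*` and `?` wildcards. The resolve function is a hypothetical illustration, not CloudFront's implementation.

```python
from fnmatch import fnmatchcase

# (path pattern, origin, cache policy), in the order the behaviors are listed.
BEHAVIORS = [
    ("/graphql/*", "ALB (API)", "CachingDisabled"),
    ("/static/*", "S3 static bucket", "CachingOptimized"),
    ("/thumbnail/*", "S3 media bucket", "CachingOptimized"),
    ("/media/*", "S3 media bucket", "CachingOptimized"),
    ("/.well-known/jwks.json", "ALB (API)", "CachingDisabled"),
]

# The default behavior catches every path no pattern above matched.
DEFAULT = ("ALB (API)", "CachingOptimized")


def resolve(path):
    """Return (origin, cache policy) for a request path; first match wins."""
    for pattern, origin, policy in BEHAVIORS:
        if fnmatchcase(path, pattern):
            return origin, policy
    return DEFAULT


if __name__ == "__main__":
    for path in ["/graphql/", "/static/js/app.3f9c.js", "/graphql", "/checkout/cart"]:
        print(path, "->", resolve(path))
```

In this model a request to /graphql without the trailing slash does not match /graphql/* and falls through to the cached default. That is the kind of edge case worth testing against the real distribution.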

S3 origin access is enforced with Origin Access Control (OAC) using SigV4 signing, replacing the legacy OAI pattern. Bucket policies reject all direct S3 requests and only allow the CloudFront service principal. TLS minimum: TLSv1.2_2021. HTTP versions: HTTP/2 and HTTP/3.

Data Layer

PostgreSQL 16 runs on RDS with parameter groups that enable query logging and set max_connections = 1000. ElastiCache Redis serves multiple logical databases per cluster: DBs 0–5 are partitioned across Saleor cache, Celery broker, FundGate, and Storefront to avoid key namespace collisions while sharing a single node in staging. The EKS platform uses Redis 6.x; the ECS checkout platform uses Redis 7.1 with dual-stack networking. RabbitMQ (Amazon MQ, mq.m7g.medium) handles async messaging for event-driven services.

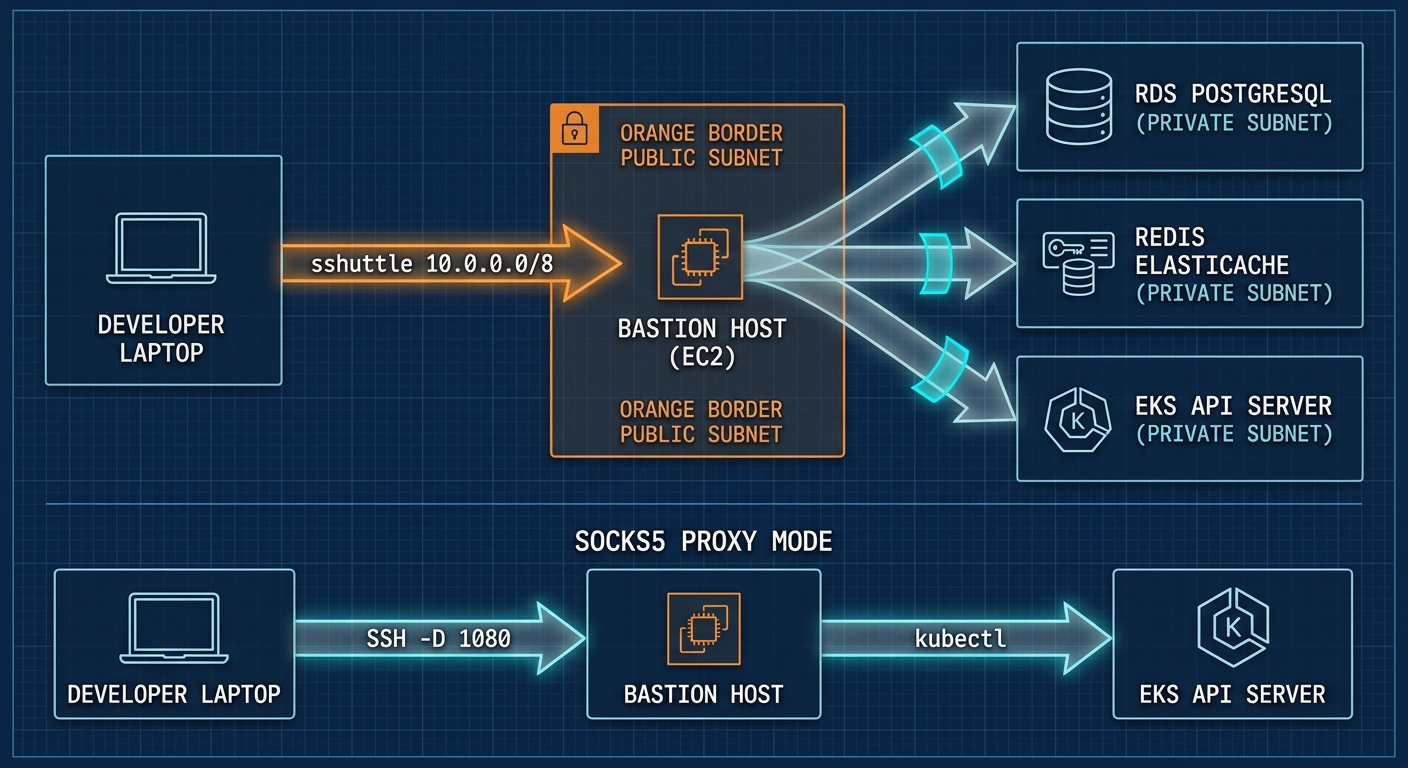

Bastion Host Architecture

Private cluster resources — RDS, Redis, EKS API, RabbitMQ — are not reachable from the public internet. The access pattern uses a bastion host in each environment's public subnet as the sole ingress point. There are two usage modes depending on whether you need full network routing or just proxy access:

sshuttle mode establishes a transparent L3 VPN over SSH: sshuttle -r deploy@<bastion-ip> -e "ssh -i <key>" 10.0.0.0/8. This routes the entire 10.0.0.0/8 supernet through the bastion, so psql, redis-cli, and kubectl all work from your local machine as if you were inside the VPC. This is the standard operational mode for database migrations and cluster maintenance.

SOCKS5 proxy mode creates a SOCKS5 proxy: ssh -D 1080 -N deploy@<bastion-ip>, then export HTTPS_PROXY=socks5://127.0.0.1:1080. This is the lighter-weight option, used when only EKS API access through kubectl is needed and routing the full supernet is unnecessary.

SSH public keys are managed via pull requests to the bastion-access repository. Adding or revoking a developer's access requires a PR review — no direct console access, no shared credentials. Separate bastion instances serve stage and production, with the prod bastion exposed via a DNS alias rather than a raw IP.

Bastion access — sshuttle routing 10.0.0.0/8 through the EC2 bastion for transparent VPC access (top), and SOCKS5 proxy mode for kubectl-only traffic (bottom)

SOPS + KMS Encrypted Secrets

All Kubernetes secrets (database DSNs, API keys, payment credentials, JWT secrets) are stored as SOPS-encrypted ConfigMaps in Git. A customer-managed KMS key provisioned by Terraform handles encryption, with grants managed via IAM. The GitHub Actions deploy workflow decrypts manifests at deploy time using short-lived AWS credentials vended via OIDC, which carry KMS decrypt permission on that key, so no plaintext secrets ever appear in the repo or CI logs. The secrets Terraform module provisions Secrets Manager secrets for ECS workloads using the same IAM-controlled access pattern.

GitHub Actions OIDC — Keyless CI/CD

There are no long-lived AWS access keys in GitHub Actions. The github-oidc Terraform module provisions an AWS IAM OIDC identity provider bound to token.actions.githubusercontent.com and creates a federated IAM role that GitHub Actions can assume for specific repositories (acme-platform/*). The role has least-privilege policies: ECR describe/push, EKS describe, KMS decrypt for SOPS. Credentials are valid only for the duration of the workflow run and are scoped to the specific repo and branch.

| IAM Principal | Permissions | Scope |
|---|---|---|
| GitHub Actions OIDC role | ECR push/describe, EKS describe, KMS decrypt | acme-platform/* repos only |
| EKS Node role | AmazonEKSWorkerNodePolicy, ECR pull, VPC CNI | EKS worker nodes only |
| ECS task role (Saleor) | S3 GetObject/PutObject/Delete (media), Secrets Manager GetSecretValue, DynamoDB CRUD (avatax) | Scoped to per-service S3 prefixes and secret ARNs |
| ECS task role (Celery) | S3 read/write (media bucket), CloudWatch PutLogEvents | Media bucket only |
| SES SMTP user | ses:SendRawEmail | Mailer service only, via access key in Secrets Manager |
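The repo and branch scoping of the GitHub Actions role comes from conditions in its trust policy. The sketch below builds a trust policy of the usual shape for GitHub's OIDC provider and checks sample subject claims against it. The account ID, the main branch, and the payflow repository name are placeholders chosen for illustration. The exact conditions in the real github-oidc module are not shown in this article, and fnmatchcase only approximates IAM's StringLike matching.

```python
import json
from fnmatch import fnmatchcase

OIDC_HOST = "token.actions.githubusercontent.com"


def github_trust_policy(account_id, org, branch="main"):
    """Build a trust policy dict for a role assumable from GitHub Actions."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {
                "Federated": f"arn:aws:iam::{account_id}:oidc-provider/{OIDC_HOST}"
            },
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {
                # The token must be issued for AWS STS.
                "StringEquals": {f"{OIDC_HOST}:aud": "sts.amazonaws.com"},
                # Any repo in the org, but only the given branch (assumed).
                "StringLike": {
                    f"{OIDC_HOST}:sub": f"repo:{org}/*:ref:refs/heads/{branch}"
                },
            },
        }],
    }


def subject_allowed(policy, sub_claim):
    """Approximate the StringLike check on the token's sub claim."""
    condition = policy["Statement"][0]["Condition"]["StringLike"]
    return fnmatchcase(sub_claim, condition[f"{OIDC_HOST}:sub"])


if __name__ == "__main__":
    policy = github_trust_policy("123456789012", "acme-platform")  # placeholder account
    print(json.dumps(policy, indent=2))
    print(subject_allowed(policy, "repo:acme-platform/payflow:ref:refs/heads/main"))
```

A workflow running from another organization, or from a feature branch, presents a sub claim that fails the pattern, so the AssumeRoleWithWebIdentity call is refused before any permission policy is evaluated.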

EKS RBAC — aws-auth ConfigMap

Kubernetes RBAC is managed via the aws-auth ConfigMap, also version-controlled in the repo. Ten IAM users are mapped to the system:masters group, granting cluster-admin access. Node roles are mapped to system:bootstrappers and system:nodes for kubelet registration. Any RBAC change goes through code review — there is no ad-hoc kubectl edit configmap aws-auth pattern in production.

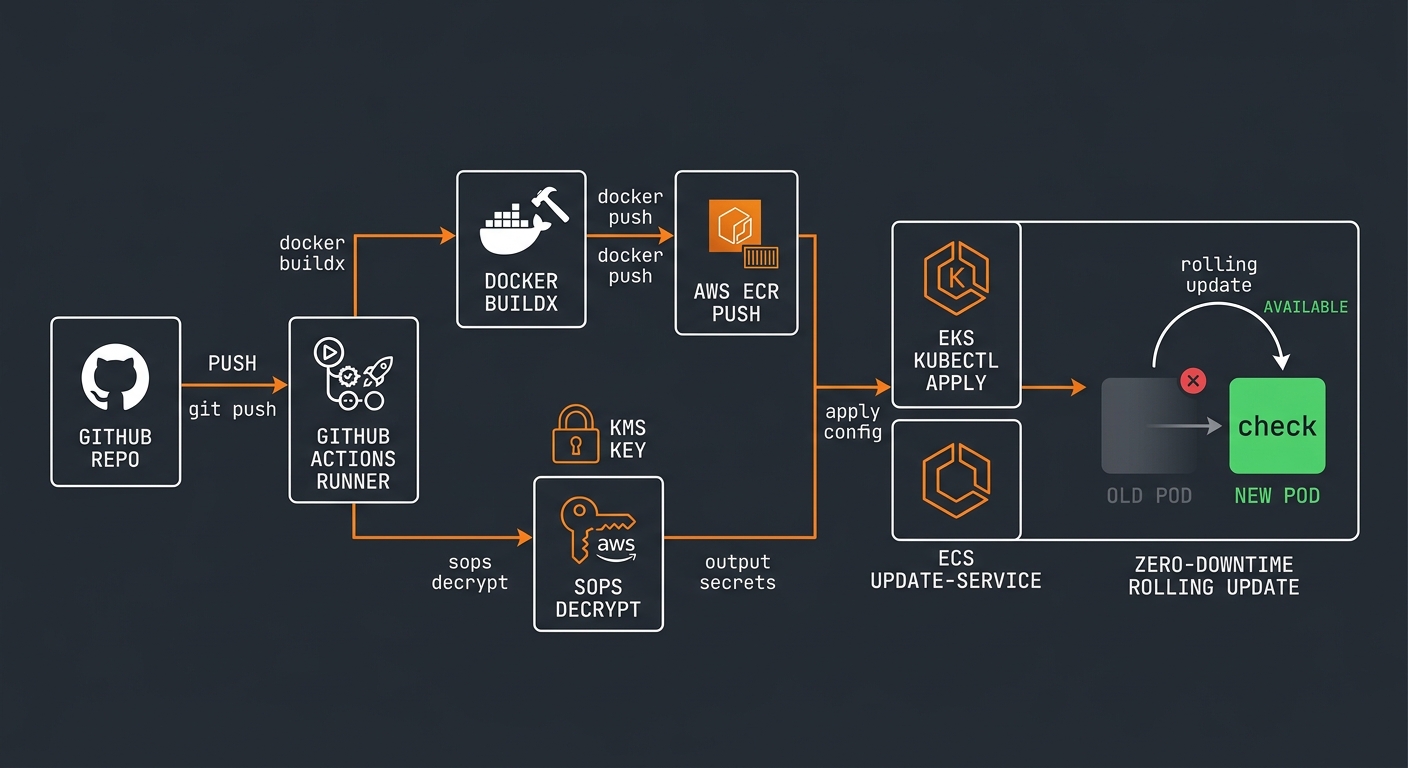

GitOps Deployment Pipeline

The deployment model is declarative and Git-driven. Kubernetes manifests live in deploy/<service>/<env>/ with a standard structure: namespace.yaml, deployment.yaml, service.yaml, env-config.sops.yaml. On merge to main, GitHub Actions runs kubectl apply against the target cluster, decrypting SOPS secrets at runtime with the KMS key, which it reaches through OIDC-vended credentials. There is no manual kubectl apply in the deployment lifecycle.
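Because every service directory follows the same four-file structure, a pipeline can verify it before applying anything. This is a minimal sketch of such a pre-deploy check. missing_manifests is a hypothetical helper and is not described as part of the actual workflow.

```python
from pathlib import Path

# The standard file set expected under deploy/<service>/<env>/.
REQUIRED = (
    "namespace.yaml",
    "deployment.yaml",
    "service.yaml",
    "env-config.sops.yaml",
)


def missing_manifests(deploy_root, service, env):
    """Return the required manifest names absent from deploy/<service>/<env>/."""
    target = Path(deploy_root) / service / env
    return [name for name in REQUIRED if not (target / name).is_file()]


if __name__ == "__main__":
    # Example: check one service/environment pair in the current checkout.
    print(missing_manifests("deploy", "payflow", "stage"))
```

An empty list means the directory is complete. Anything else names the files a reviewer should ask about before the merge.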

Docker buildx builds for linux/amd64. The workflow logs in to the registry with aws ecr get-login-password | docker login --username AWS --password-stdin <registry>, then pushes the image to ECR with the Git commit SHA as the tag. ECR repos have AES256 encryption at rest.

The aws-actions/configure-aws-credentials@v4 action exchanges the GitHub OIDC token for temporary AWS credentials. No secrets stored in GitHub. Credentials scoped to the deploying repo and branch.

SOPS decrypts env-config.sops.yaml using the KMS key. kubectl apply -f deploy/<service>/<env>/ runs against the cluster kubeconfig. Kubernetes rolling update handles zero-downtime deployment — old pods stay up until new pods pass readiness probes.

ECS deployments update the task definition with the new image tag using aws ecs register-task-definition + aws ecs update-service --force-new-deployment. ECS replaces tasks using the minimum-healthy-percent/maximum-percent rolling strategy defined in the service config.
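The two percentages in the ECS rolling strategy translate into a floor and a ceiling on running tasks during a deployment. The sketch below works through that arithmetic, with the minimum rounded up and the maximum rounded down as ECS documents it. The sample values are illustrative assumptions; the article does not state the percentages configured for these services.

```python
def rolling_bounds(desired_count, minimum_healthy_percent, maximum_percent):
    """Return (min running tasks, max running tasks) during a rolling deploy."""
    # Minimum healthy is rounded up to the nearest whole task.
    lower = -(-desired_count * minimum_healthy_percent // 100)
    # Maximum is rounded down to the nearest whole task.
    upper = desired_count * maximum_percent // 100
    return lower, upper


if __name__ == "__main__":
    # Assumed example settings, not the platform's actual values.
    for desired, min_pct, max_pct in [(2, 100, 200), (3, 50, 200), (1, 100, 100)]:
        print(desired, min_pct, max_pct, "->", rolling_bounds(desired, min_pct, max_pct))
```

With a desired count of 2 at 100/200, ECS can start two new tasks before stopping the old ones. At 100/100 with a single task, the floor and ceiling are equal, so there is no headroom to start a replacement.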

GitOps pipeline — git push triggers Docker Buildx → ECR push in parallel with SOPS KMS decryption, both feeding into EKS kubectl apply and ECS update-service with zero-downtime rolling update

Terraform State and Environment Promotion

Terraform state is stored in S3 (terraform-state-platform bucket, us-east-1) with environment-isolated key prefixes. Each environment (infra-stage/, infra-prod/, and the dev, stage, and prod checkout environments) has its own state file, which limits the blast radius of a failed apply to a single environment. Remote state outputs are consumed across configurations using terraform_remote_state data sources. For example, the checkout environment reads the Route53 zone ID and ACM certificate ARN from the account-level state without duplicating the resource.

What I Would Do Differently

The Terraform state backend has no explicit DynamoDB locking table, which means concurrent terraform apply runs are technically possible. In practice, the team was small enough that this was managed by convention, but at any reasonable team size a DynamoDB lock table should be standard.
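A lock table closes this gap by making the write conditional: the lock item is created only if no item already exists for that state path. The sketch below is an in-memory model of that rule, not Terraform's or DynamoDB's implementation. The LockTable class and the state key shown are hypothetical.

```python
class LockTable:
    """In-memory stand-in for a lock table keyed by state path."""

    def __init__(self):
        self._items = {}

    def acquire(self, lock_id, owner):
        """Conditional put: succeed only if no lock item exists for this key."""
        if lock_id in self._items:
            return False
        self._items[lock_id] = owner
        return True

    def release(self, lock_id, owner):
        """Delete the lock item, but only for the owner that holds it."""
        if self._items.get(lock_id) == owner:
            del self._items[lock_id]
            return True
        return False


if __name__ == "__main__":
    table = LockTable()
    # Hypothetical lock ID in "<bucket>/<state key>" form.
    state = "terraform-state-platform/infra-prod/terraform.tfstate"
    print(table.acquire(state, "alice"))  # first apply takes the lock
    print(table.acquire(state, "bob"))    # concurrent apply is refused
    table.release(state, "alice")
    print(table.acquire(state, "bob"))    # succeeds once the lock is released
```

Without the table, both applies would proceed and the later state write would overwrite the earlier one.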

Redis logical database partitioning (DBs 0–5 on a single node) works in staging but is operationally fragile: a FLUSHALL, or a FLUSHDB issued against the wrong index, can wipe another application's broker queue. The correct production pattern is separate ElastiCache clusters per application, or at minimum Redis 7 keyspace isolation with ACLs.

The EKS node group uses t2.large instances. Under sustained CPU credit exhaustion (the t2 burstable model), nodes can degrade unpredictably. m5.large or m6i.large instances with consistent CPU delivery would be more appropriate for production Kubernetes worker nodes handling latency-sensitive workloads.
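The credit arithmetic behind this concern can be made concrete. The sketch below assumes the commonly published t2.large figures (2 vCPUs, 36 credits earned per hour, a maximum balance of 864) and the rule that one credit buys one vCPU at 100% for one minute. These numbers are assumptions and should be verified against current AWS documentation. The function names are hypothetical.

```python
# Assumed t2.large figures; verify against current AWS documentation.
T2_LARGE = {"vcpus": 2, "credits_per_hour": 36, "max_credits": 864}


def net_credits_per_hour(utilization_pct, spec=T2_LARGE):
    """Credits earned minus credits spent per hour at a given per-vCPU load."""
    spent = spec["vcpus"] * 60 * utilization_pct / 100
    return spec["credits_per_hour"] - spent


def hours_until_exhausted(utilization_pct, start_credits=None, spec=T2_LARGE):
    """Hours until the balance hits zero, or None if it never drains."""
    balance = spec["max_credits"] if start_credits is None else start_credits
    net = net_credits_per_hour(utilization_pct, spec)
    if net >= 0:
        return None
    return balance / -net


if __name__ == "__main__":
    for load in (30, 60, 100):
        print(f"{load}% per vCPU ->", hours_until_exhausted(load))
```

Under these assumptions the break-even point is 30% per vCPU. A node pinned at 100% drains a full balance in roughly ten hours, after which it is held to baseline performance.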